I just taught at ETH the basic result of the theory of Hilbert spaces that states that, given such a space H, a vector v in H and a closed, non-empty, convex subset C, there exists a unique vector y in C such that

and which deserves to be called the orthogonal projection of v on C, as the picture tries to illustrate:

Having done this, I looked for counterexamples to illustrate that this natural statement does not extend to the Banach space setting; it is easy enough to find situations where the minimum is achieved more than once (in fact, infinitely often), but slightly more challenging to find one where the minimum is not achieved at all. Here is one (it was in one of the books I use for my course): consider the Banach space c0 of sequences (indexed by n>0) of complex numbers converging to 0, with the sup norm

Consider then the linear map

on c0. It is clear that this is a continuous linear map since it is bounded by 1 on the unit ball, and a moment’s thought shows that in fact we have

because equality would require that u be a constant sequence, and this is impossible if the sequence is to converge to zero, except (of course) when the constant is zero, but then λ(0)=0 anyway….

This is a case where a supremum is not achieved, and so it is easily transformed to what we want by taking, e.g., v=0 and the convex set

Then there does not exist a vector in C minimizing the distance to the origin.

Now, this is probably not too surprising to anyone, but while preparing these counterexamples for exercises for the students of my course, I was reminded of a beautiful approximation result of Chebychev which is probably not well-known (outside the numerical analysis community, where it seems to be standard knowledge) and which gives a particular (important) case outside of the Hilbert space context where the minimization process works.

Consider the (real) Banach space B of real-valued continuous functions on [0,1] with the supremum norm (i.e., the norm of uniform convergence of functions). Fix then a degree d>0. In B, the subspace Pold of real polynomials of degree at most d is a closed (non-empty) convex subset. The approximation problem is therefore, for a given continuous function f, to find the polynomials P of degree at most d which minimize the distance to f:

must be equal to

It is not very difficult to prove the existence of at least one such polynomial P (the idea being to show that the minimization can be restricted to a compact subset of the subspace Pold). It is more difficult to prove unicity, and even more subtle to find the following characterization (which was found by Chebychev): a polynomial P (of degree at most d) is the best approximation to f in this sense if and only if, there exist at least d+2 points, say

such that

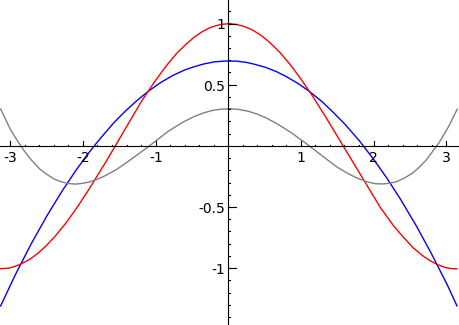

and moreover the signs of f(xi)-P(xi) alternate when i increases. Below, I call the existence of points with these two properties the “d+2 alternation property”; note that it says nothing about the size of the distance from f to P. Here is an illustration:

the red function f is the cosine function, and the blue graph is the best approximation of degree (at most) 3; the gray line is the difference cos(x)-P(x), and the 5 alternating extrema are clearly visible. (I used Maple to construct the approximation, and Sage to plot the graph).

The unicity seems to be typically proved by first showing that there is at most one polynomial with the d+2 alternation property (which is fairly easy; in fact, alternation with d+1 points would be enough), and then showing that minimizing polynomials are characterized by it. The most technical part is the proof that a minimizing polynomial has the required property (if there are less than d+2 alternations, one shows how to improve the approximation).

But the converse (an alternating polynomial is minimizing) looks also quite difficult at first since, as we observed, the defining condition doesn’t say anything about the size of

only that it is achieved at d+2 points. But here is the trick: suppose another polynomial Q (of degree at most d) is such that

Then, at the points xi, the difference Q-P has the same sign as f-P (by writing

and the second summand is not big enough, by (*), to provoke a change of sign). By the assumption of alternation of signs of the d+2 values

(which hasn’t been used yet), this means the polynomial Q-P, of degree at most d, has at least d+1 changes of signs, hence that many zeros, so we must have Q=P, which is a contradiction.

Incidentally, the only reason I know this result is that it was the subject of one of the entrance exams at the French École Polytechnique, and that this exam text was (because of this) used by the teacher of my Math. Spé for one of our weekly take-home exams; the last questions were set up in a challenging way (which I don’t remember, but it must have been the proof of the clever part above), and the result was fixed in my mind because I succeeded in finding the trick…

For a full account, see for instance Analysis of Numerical Methods, by Isaacson and Keller (which I only have in my personal library because it was in the bargains bin in the English bookstore in Bordeaux one day, for some mysterious reason).

Did you pass your entrance exams ?

That exam was one of a previous year; and in fact I ended up not trying to get into École Polytechnique for various reasons…